The Production Chasm: Bridging Model Development and Operational Stability

For professionals and early adopters who depend on AI in their work, a model’s value is measured not by its performance in a lab but by its reliability, stability, and sustained accuracy in a real-world production environment. Transitioning a working machine learning model into a robust, monitorable, and scalable service is the core mission of MLOps (Machine Learning Operations). This deep dive covers the main challenges of deployment and continuous monitoring, and explains why detecting and correcting drift is necessary to keep AI performance stable and protect the investment behind it.

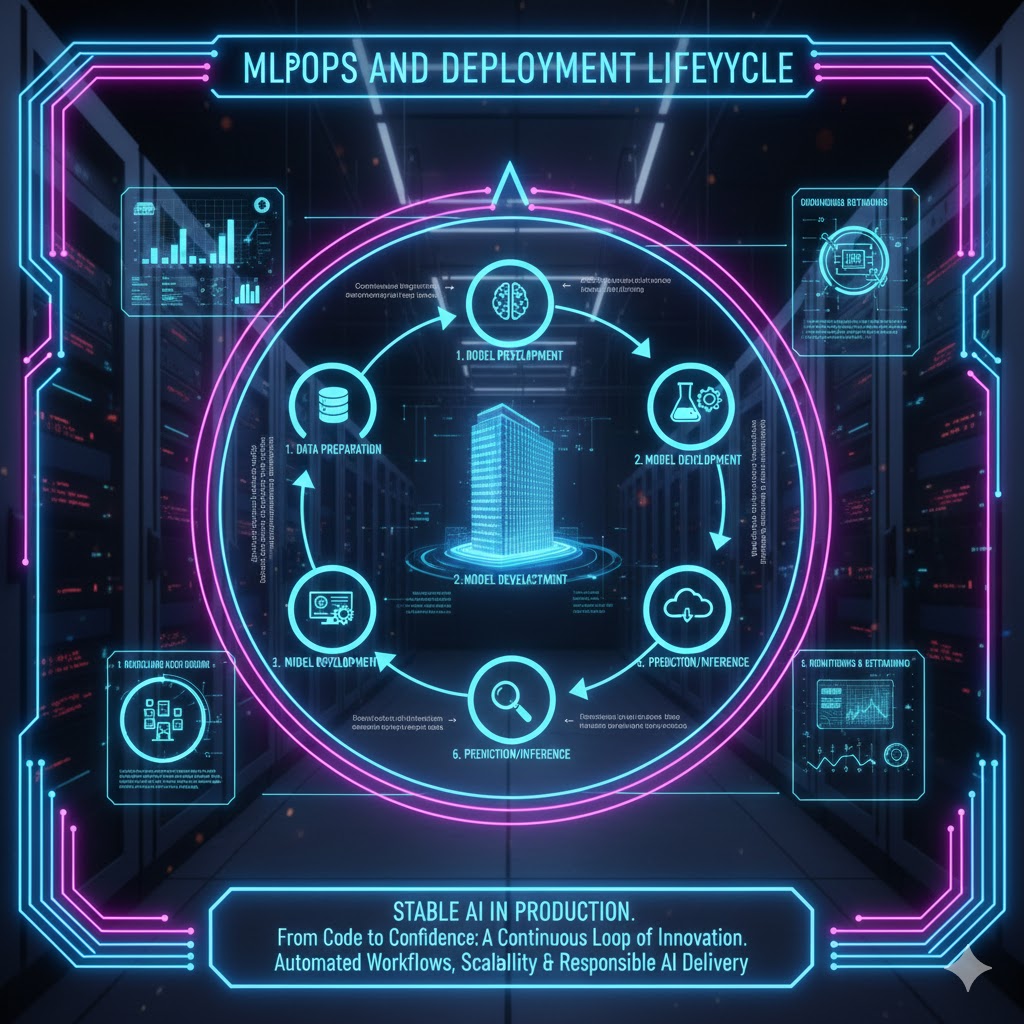

Architectural Deep Dive: The Core Pillars of MLOps

MLOps goes beyond simple deployment; it integrates ML models into a continuous integration/continuous delivery (CI/CD) pipeline, treating the model as a living software component that requires constant care.

1. Continuous Integration and Delivery (CI/CD)

Effective MLOps demands automation across the entire lifecycle:

- Continuous Integration (CI): This includes not just code testing, but also data and model validation. Every new data pipeline or feature branch is checked against baseline performance metrics before it is considered ready for deployment.

- Continuous Delivery (CD): This automates the safe, repeatable, and scalable deployment of the validated model into a production environment. Key strategies include containerization and orchestration (e.g., Docker, Kubernetes) to ensure environment consistency and make rollback straightforward.
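The CI check against baseline metrics described above can be sketched as a small gate function. This is a minimal illustration, not a prescribed implementation: the names `validation_gate` and `GateResult`, the `max_drop` tolerance, and the metric values are all hypothetical, and every metric is assumed to be "higher is better".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    passed: bool
    failures: list


def validation_gate(candidate: dict, baseline: dict, max_drop: float = 0.01) -> GateResult:
    """Fail if any baseline metric is missing or drops by more than max_drop.

    Assumption: all metrics are "higher is better" (e.g. accuracy, F1).
    """
    failures = []
    for name, base_value in baseline.items():
        if name not in candidate:
            failures.append(f"{name}: missing from candidate metrics")
            continue
        drop = base_value - candidate[name]
        if drop > max_drop:
            failures.append(f"{name}: dropped {drop:.3f} (limit {max_drop:.3f})")
    return GateResult(passed=not failures, failures=failures)


if __name__ == "__main__":
    # Hypothetical metric values for illustration only.
    baseline = {"accuracy": 0.91, "f1": 0.88}
    candidate = {"accuracy": 0.92, "f1": 0.84}
    result = validation_gate(candidate, baseline)
    print(result.passed, result.failures)
```

In a real pipeline, a failed gate would typically make the CI job exit non-zero so that the delivery stage never runs for that candidate.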

2. Model Serving and Scalability

A model must be served efficiently to handle real-time inference requests. Modern MLOps favors dedicated serving frameworks (like TensorFlow Serving or TorchServe) that provide:

- Low Latency: Optimized pathways to minimize the time between a request and a prediction.

- Horizontal Scalability: The ability to quickly scale inference endpoints up or down based on traffic demands, preventing bottlenecks during peak usage.
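As a rough illustration of horizontal scaling, the sketch below computes a replica count from observed load using a proportional rule of the kind documented for the Kubernetes Horizontal Pod Autoscaler. The function name, the load units (for example, requests per second per replica), and the default bounds are assumptions made for this example.

```python
import math


def desired_replicas(current_replicas: int, current_load: float, target_load: float,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Scale replicas in proportion to observed load per replica versus the target.

    current_load and target_load are per-replica values in the same unit.
    """
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    raw = math.ceil(current_replicas * current_load / target_load)
    # Clamp to the configured bounds so a traffic spike cannot scale without limit.
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    print(desired_replicas(4, 150, 100))  # load above target: scale out
    print(desired_replicas(4, 40, 100))   # load below target: scale in
```

The upper bound is a deliberate design choice: it caps cost during unexpected traffic spikes, at the price of higher latency once the cap is reached.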

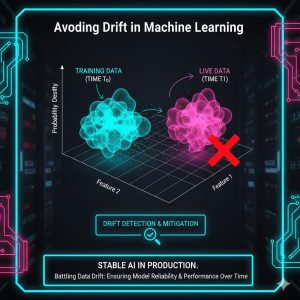

The Production Predator: Understanding and Avoiding Drift

Once a model is live, its performance tends to degrade over time as the world it models changes. The underlying cause, known as drift, is one of the biggest operational threats to stable AI. Drift occurs when the statistical properties of the input data, or the relationship between the inputs and the target variable, change in unanticipated ways.

Data Drift vs. Concept Drift

Understanding the two primary types of drift is crucial for choosing the right response:

- Data Drift: The statistical properties of the input data ($\mathbf{X}$) change. For example, a global event alters consumer buying behavior, so the model sees purchase patterns that were rare or absent in its historical training data.

- Concept Drift: The relationship between the input variables ($\mathbf{X}$) and the target variable ($\mathbf{y}$) changes. For example, a model trained to predict fraudulent transactions sees a new, sophisticated type of fraud emerge that it was never trained on. The features remain the same, but the meaning of “fraud” has shifted.
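The distinction above can be stated compactly in terms of probability distributions. The subscripts "train" and "live" are notation introduced here for illustration. Writing the joint distribution of inputs and targets as

$$
P(\mathbf{X}, \mathbf{y}) = P(\mathbf{y} \mid \mathbf{X})\, P(\mathbf{X}),
$$

the two types of drift correspond to a change in one factor or the other:

$$
\text{Data drift:}\quad P_{\text{train}}(\mathbf{X}) \neq P_{\text{live}}(\mathbf{X})
$$

$$
\text{Concept drift:}\quad P_{\text{train}}(\mathbf{y} \mid \mathbf{X}) \neq P_{\text{live}}(\mathbf{y} \mid \mathbf{X})
$$

In practice both can occur together. A change in $P(\mathbf{X})$ can be monitored from inputs alone, while a change in $P(\mathbf{y} \mid \mathbf{X})$ generally requires ground-truth outcomes to detect.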

Continuous Monitoring and Alerting

The primary defense against drift is robust, real-time monitoring. This is where the future-proofing of an AI system truly resides.

- Performance Monitoring: Tracking the model’s primary business metric (e.g., accuracy, precision, ROI) against a historical baseline.

- Data Quality Monitoring: Tracking the statistical distributions of all input features to detect Data Drift. Monitoring thresholds for mean, standard deviation, and missing values can trigger alerts before accuracy is impacted.

- Drift Detection: Employing specialized statistical tests (like the Kolmogorov-Smirnov test or the population stability index (PSI)) to continuously compare the live data distribution against the training data distribution. This early warning system is essential for catching drift before it impacts the bottom line.
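The two drift checks named above can be sketched with the standard library alone. This is a simplified illustration for a single numeric feature: the equal-width binning, the `eps` floor for empty bins, and the synthetic Gaussian data are assumptions made for this example. Production systems would more likely use a tested implementation such as `scipy.stats.ks_2samp`, which also returns a p-value.

```python
import math
import random
from bisect import bisect_right


def psi(expected, actual, bins: int = 10, eps: float = 1e-6) -> float:
    """Population stability index with equal-width bins over the expected range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def ks_statistic(sample_a, sample_b) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


if __name__ == "__main__":
    rng = random.Random(0)  # synthetic data, for illustration only
    train = [rng.gauss(0.0, 1.0) for _ in range(5000)]
    live_shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]
    print("PSI:", psi(train, live_shifted))
    print("KS :", ks_statistic(train, live_shifted))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but alert thresholds should be calibrated per feature rather than taken as fixed.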

Strategies for Remediation and Stable AI

When drift is detected, effective MLOps ensures a swift, automated, and secure remediation process to maintain stable AI performance.

Automated Retraining Pipelines

The primary response to drift is retraining. This process must be entirely automated:

- Trigger Mechanism: A monitoring alert (indicating significant drift or performance drop) automatically initiates the retraining pipeline.

- Data Validation: The pipeline sources the most recent, validated production data.

- Hyperparameter Search: The system runs an automated hyperparameter search to ensure the newly trained model is optimized for the shifted data distribution.

- Shadow Deployment: Before replacing the current model, the new model is deployed in “shadow mode,” running parallel to the current production model. It receives a copy of real production traffic, but its predictions are only logged and compared, never returned to users, so the evaluation has no user impact. Only once the shadow model confirms superior performance is the switch made. This process ensures stable AI delivery.
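The trigger and shadow-deployment steps above can be sketched as follows. Everything here is illustrative: the thresholds `psi_limit` and `accuracy_floor`, the names `should_retrain`, `shadow_compare`, and `ShadowReport`, and the toy models are hypothetical. Accuracy stands in for whatever metric a real pipeline would track.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class ShadowReport:
    production_accuracy: float
    candidate_accuracy: float

    @property
    def promote(self) -> bool:
        # Promote only if the shadow model is strictly better.
        return self.candidate_accuracy > self.production_accuracy


def should_retrain(psi_value: float, live_accuracy: float,
                   psi_limit: float = 0.25, accuracy_floor: float = 0.85) -> bool:
    """Trigger: fire when drift or a performance drop crosses a threshold."""
    return psi_value > psi_limit or live_accuracy < accuracy_floor


def shadow_compare(production: Callable, candidate: Callable,
                   requests: Sequence, labels: Sequence) -> ShadowReport:
    """Score both models on the same traffic; only production output is served."""
    prod_hits = cand_hits = 0
    for x, y in zip(requests, labels):
        served = production(x)    # the response a user would actually receive
        shadowed = candidate(x)   # logged for comparison, never returned
        prod_hits += served == y
        cand_hits += shadowed == y
    n = len(labels)
    return ShadowReport(prod_hits / n, cand_hits / n)


if __name__ == "__main__":
    requests = list(range(10))
    labels = [x > 3 for x in requests]  # toy ground truth
    report = shadow_compare(lambda x: x > 5, lambda x: x > 3, requests, labels)
    print(should_retrain(0.31, 0.90), report, report.promote)
```

Because ground-truth labels often arrive with a delay, a real shadow comparison may run for days before enough labeled traffic accumulates to justify promotion.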

Governance and Explainability

Effective MLOps provides the necessary audit trail for regulatory and business governance.

- Model Registry: Every model version, its training data, its performance metrics, and its associated codebase must be recorded in a central registry.

- Explainability (XAI): Tools like SHAP and LIME can be integrated into the deployment pipeline so that each prediction can be accompanied by an explanation of which features drove it. This is vital for compliance and for troubleshooting errors caused by sudden data shifts.
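The registry requirement above amounts to storing an immutable record per model version. The sketch below is a minimal in-memory illustration covering only the registry, not explainability. `ModelRecord`, `InMemoryRegistry`, and `fingerprint` are hypothetical names, and a real deployment would use a persistent registry service rather than a Python dictionary.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class ModelRecord:
    name: str
    version: str
    training_data_sha256: str   # fingerprint of the exact training data
    code_commit: str            # commit of the training code
    metrics: dict = field(default_factory=dict)


def fingerprint(data: bytes) -> str:
    """Content hash used to tie a model version to its training data."""
    return hashlib.sha256(data).hexdigest()


class InMemoryRegistry:
    def __init__(self):
        self._records = {}

    def register(self, record: ModelRecord) -> None:
        key = (record.name, record.version)
        if key in self._records:
            # Versions are write-once so the audit trail cannot be rewritten.
            raise ValueError(f"{record.name}:{record.version} already registered")
        self._records[key] = record

    def get(self, name: str, version: str) -> ModelRecord:
        return self._records[(name, version)]

    def export_audit_log(self) -> str:
        return json.dumps([asdict(r) for r in self._records.values()],
                          indent=2, sort_keys=True)


if __name__ == "__main__":
    registry = InMemoryRegistry()
    registry.register(ModelRecord(
        name="fraud-detector", version="1.0.0",           # illustrative values
        training_data_sha256=fingerprint(b"a,b\n1,2\n"),
        code_commit="abc123", metrics={"auc": 0.93}))
    print(registry.export_audit_log())
```

Making versions write-once is the key design choice: an auditor can trust that the metrics and data fingerprint attached to a version are the ones recorded when it was trained.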

Final Verdict: The MLOps Imperative for Stable AI

The investment in high-performing AI is only justified if an operational framework, MLOps, exists to support it. For any professional or organization that depends on model output, MLOps is the most practical way to future-proof an AI investment. By formalizing CI/CD, implementing continuous drift monitoring, and automating the retraining loop, organizations can move from reactive troubleshooting to proactive stability. The complexity of modern AI demands this level of operational maturity to keep models stable in production and to prevent performance from decaying silently in the background. MLOps is no longer optional; it is the cornerstone of sustainable, enterprise-grade machine learning.

| Evaluation Metric | Score (Out of 10.0) | Note/Rationale |
| --- | --- | --- |
| Drift Detection & Alerting | 9.5 | Essential for avoiding model degradation; requires continuous statistical monitoring (PSI/KS tests). |
| Automated Retraining Pipeline | 9.3 | The core strategy for stable AI; must include automated triggers and hyperparameter optimization. |
| Scalability & Latency (Model Serving) | 9.1 | Relates to efficient resource use and low-latency inference delivery (using containers/dedicated frameworks). |
| CI/CD Integration & Rollback | 9.4 | Ensuring repeatable, safe deployment and easy recovery from errors. |
| Governance & Explainability (XAI) | 9.2 | Auditability and the ability to troubleshoot errors or regulatory non-compliance. |
| REALUSESCORE.COM FINAL SCORE | 9.3 / 10 | The weighted final average reflecting the operational necessity of MLOps. |