💡 The Architectural Crossroads Defining AI Scalability

In 2026, the success of any enterprise AI solution hinges not just on the brilliance of the model, but on the robustness of its underlying infrastructure. The core decision facing Chief Technology Officers and engineering leads is the same enduring architectural debate: Microservice Architecture vs Monolith.

For traditional web applications, the choice is often clear, but the unique demands of modern AI systems (real-time inference, massive data pipelines, and rapidly evolving models) add layers of complexity. AI deployment requires architects to consider factors like model serving latency, the isolation of feature stores, and the speed of model updates. Choosing the wrong architecture can lead to unsustainable operational costs, slow development cycles, and significant performance bottlenecks. This pillar guide provides a deep technical analysis of both approaches, tailored specifically to the AI and machine learning (ML) ecosystem.

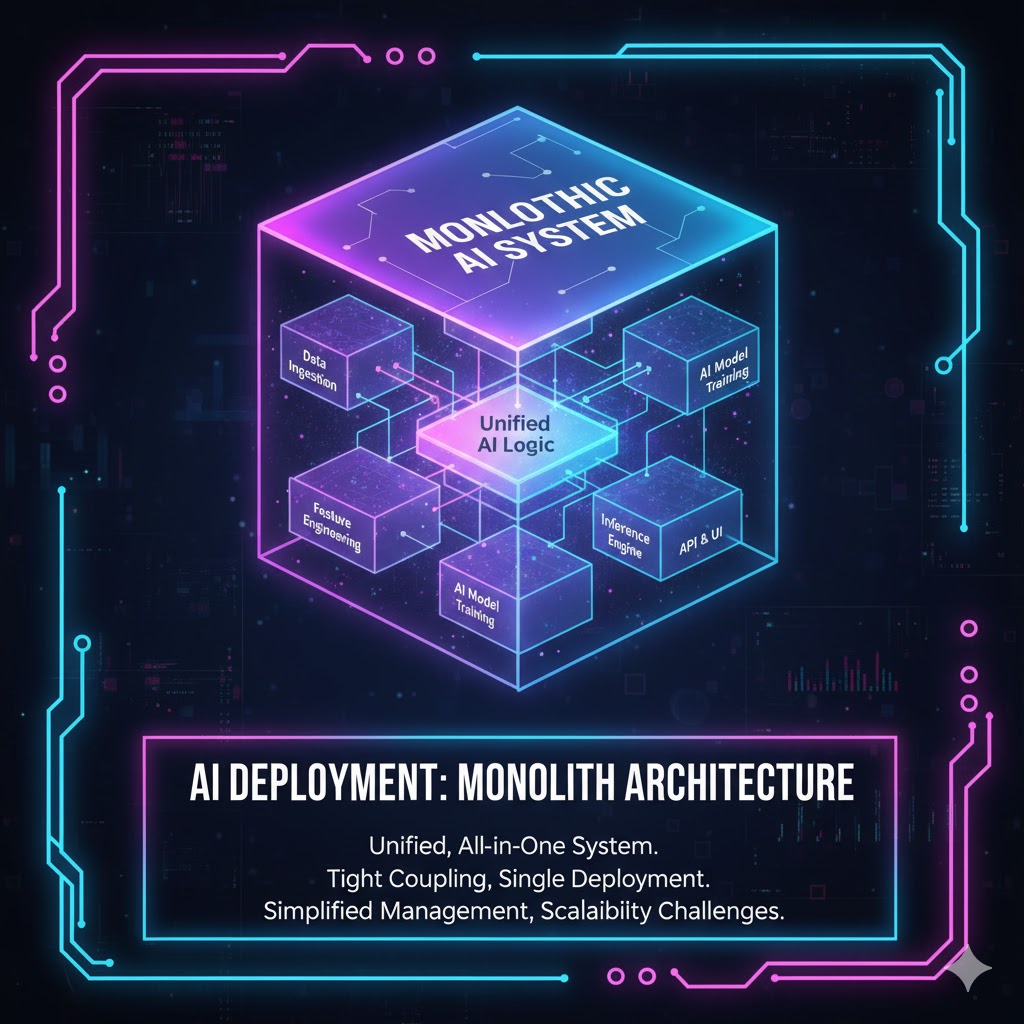

1. Monolith Architecture: The Simplicity Tradeoff

A Monolith architecture is a unified, single-tiered software application where all components and services are tightly coupled and run as a single process [1].

Monolith in AI Deployment

In AI contexts, a monolithic system typically means:

- A single repository containing all model files, data preprocessing logic, API endpoints, and business logic.
- All inference requests are handled by one deployed application instance.
- Database and feature store interactions are centrally managed by the single application [2].
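As a rough illustration of the points above, the sketch below puts preprocessing, a toy "model", and business logic into one class that runs in one process. The class name `MonolithApp`, the field names, and the weights are all hypothetical and chosen only for this example; a real system would load an actual model file.

```python
from dataclasses import dataclass


@dataclass
class MonolithApp:
    """Everything lives in one process: features, model, and business logic."""

    # Stand-in for a loaded model file (hypothetical weights).
    weights: tuple[float, float] = (0.8, 0.2)

    def preprocess(self, raw: dict) -> list[float]:
        # Data preprocessing logic, reached by a plain in-memory call.
        return [float(raw["clicks"]), float(raw["age_days"])]

    def predict(self, features: list[float]) -> float:
        # "Model inference": a toy linear score.
        return sum(w * x for w, x in zip(self.weights, features))

    def handle_request(self, raw: dict) -> dict:
        # API endpoint plus business logic, all in the same call stack.
        score = self.predict(self.preprocess(raw))
        return {"score": round(score, 3), "recommend": score > 5.0}


app = MonolithApp()
response = app.handle_request({"clicks": 10, "age_days": 3})
```

Every step is a function call inside the same process, which is what keeps setup and debugging simple. It is also why changing any one step means redeploying the whole application.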

When to Choose Monolith for AI

The Monolith approach is not obsolete; it is the superior choice under specific conditions, primarily centered on simplicity and initial velocity [3].

- Proof of Concept (PoC): For rapidly validating a new AI model or a minimum viable product (MVP). Setup is fast, and debugging a single codebase is simple.
- Small-Scale Deployment: When the model is not expected to handle high throughput (as a rough guide, fewer than 100 requests per second) and the dataset is relatively small.
- Homogeneous Technology Stack: If the entire AI team is comfortable with one language (e.g., Python) and one framework (e.g., TensorFlow), the Monolith reduces integration overhead.
- Advantages: Lower operational overhead, easier logging and monitoring initially, and no cross-service network latency, because components call each other in memory.

2. Microservice Architecture: The Scalability Mandate

Microservice Architecture breaks down a single application into a collection of smaller, independent services [4]. Each service runs in its own process, communicates via lightweight mechanisms (like REST APIs or gRPC), and can be deployed independently [5].

Microservices in AI Deployment

In modern AI systems, Microservices are typically implemented as follows:

- Model Serving Service: A dedicated service for running the trained model (e.g., a service running TensorFlow Serving or TorchServe), focused purely on low-latency inference.
- Feature Store Service: A separate, highly scalable service managing and caching input features for real-time inference [6].
- Data Pipeline Service: Independent services responsible for ETL (Extract, Transform, Load) tasks, training loop management, and data validation.
- Business Logic Service: Handles user authentication, routing, and final output formatting.
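One way to picture this split is as a set of narrow contracts between services. The sketch below uses `typing.Protocol` to describe two of those contracts and wires them into a business logic service. All class and method names are hypothetical, and the in-memory classes merely stand in for what would be REST or gRPC clients in a real deployment.

```python
from typing import Protocol


class FeatureStore(Protocol):
    def get_features(self, user_id: str) -> list[float]: ...


class ModelServer(Protocol):
    def predict(self, features: list[float]) -> float: ...


class InMemoryFeatureStore:
    """Stand-in for a client of a remote feature store service."""

    def __init__(self, table: dict[str, list[float]]) -> None:
        self._table = table

    def get_features(self, user_id: str) -> list[float]:
        return self._table[user_id]


class LinearModelServer:
    """Stand-in for a client of a model serving service."""

    def __init__(self, weights: list[float]) -> None:
        self._weights = weights

    def predict(self, features: list[float]) -> float:
        return sum(w * x for w, x in zip(self._weights, features))


class BusinessLogicService:
    """Depends only on the two contracts, not on how they are deployed."""

    def __init__(self, store: FeatureStore, model: ModelServer) -> None:
        self._store = store
        self._model = model

    def recommend(self, user_id: str) -> dict:
        score = self._model.predict(self._store.get_features(user_id))
        return {"user_id": user_id, "score": round(score, 3)}


service = BusinessLogicService(
    InMemoryFeatureStore({"u1": [10.0, 3.0]}),
    LinearModelServer([0.5, 1.0]),
)
result = service.recommend("u1")
```

Because `BusinessLogicService` only sees the contracts, either stand-in could be replaced by a network client without touching the business logic, which is the property that makes independent deployment possible.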

When to Choose Microservices for AI

Microservices become essential when scalability, performance, and organizational agility are paramount.

- Diverse Model Serving: When you need to deploy many different models (e.g., image recognition, natural language processing, recommendation systems) that require different hardware (GPUs, TPUs).
- High and Variable Traffic: For public-facing services or applications requiring high availability and the ability to scale different components independently (e.g., scaling inference without scaling the data pipeline).
- Team Autonomy and Polyglot Programming: When large, distributed teams need to use different technology stacks (e.g., Python for ML, Go for API gateways, Scala for data processing).
- Disadvantages: High initial complexity, increased networking overhead, and challenging distributed tracing and logging.

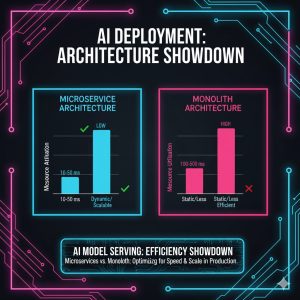

3. Key Technical Comparison: Model Serving and Latency

The ultimate test for an AI architecture is its ability to serve models with minimal latency and maximum throughput.

Latency and Communication Overhead

| Architecture | Inter-Component Latency | Inference Scaling Method | Latency Bottleneck Risk |
| --- | --- | --- | --- |
| Monolith | Very low (in-memory calls) | Scale the entire application vertically or horizontally. | A slow component cannot be scaled on its own, so it can bottleneck the entire system. |
| Microservice | Moderate (network calls, e.g., gRPC) | Scale individual services (e.g., scale only the Model Serving service). | Network latency between services is a critical design factor. |

While a Monolith has faster internal communication, Microservices allow each service to be tuned for its own workload. For instance, an AI agent system that requires fast internal communication for tool orchestration might benefit from highly optimized microservices using high-performance messaging queues. The design of these autonomous agents and the frameworks they run on further complicates the architectural decision. To understand the software layer that sits on top of this infrastructure, consult our autonomous digital workers framework comparison.
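The latency tradeoff can be made concrete with a back-of-the-envelope budget. The sketch below adds per-component compute time to a fixed cost per network hop. Every number is a hypothetical placeholder, in-memory calls are treated as free for simplicity, and real figures would have to come from measurement.

```python
def request_latency_ms(compute_ms: list[float], hop_ms: float, hops: int) -> float:
    """Total latency = per-component compute time + network hop overhead."""
    return sum(compute_ms) + hop_ms * hops


# Hypothetical compute times: feature lookup, inference, output formatting.
compute = [2.0, 15.0, 1.0]

# Monolith: components call each other in memory, so no network hops.
monolith_ms = request_latency_ms(compute, hop_ms=0.0, hops=0)

# Microservices: assume three network hops at 1.5 ms each.
microservice_ms = request_latency_ms(compute, hop_ms=1.5, hops=3)

# Relative overhead added by the network, in percent.
overhead_pct = 100 * (microservice_ms - monolith_ms) / monolith_ms
```

With these assumed numbers the network adds 4.5 ms, or 25%, to an 18 ms request. When inference dominates the budget the hop cost matters less, and when components are fast and chatty it matters more.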

State Management: Stateful vs Stateless

Monolithic applications often become tightly coupled due to shared, stateful internal memory [7]. Microservices push state (e.g., the user session or current model version) into external data stores (Redis, DynamoDB), so that individual services can remain stateless and scale horizontally. This is critical for A/B testing models and for rapid rollback.
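A minimal sketch of that idea follows, assuming the model versions and the traffic split live in an external store. A plain dict stands in for Redis or DynamoDB here, and the key names and the `pick_model_version` helper are hypothetical.

```python
import hashlib

# Stand-in for an external store such as Redis or DynamoDB (a plain dict here).
config_store = {"model:control": "v1", "model:candidate": "v2", "candidate_pct": 20}


def pick_model_version(user_id: str, store: dict) -> str:
    """Stateless routing: the same user and store contents give the same answer."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in [0, 100)
    if bucket < store["candidate_pct"]:
        return store["model:candidate"]
    return store["model:control"]


before = pick_model_version("user-42", config_store)

# Rollback: a single write to the external store, no service redeploy.
config_store["candidate_pct"] = 0
after_rollback = pick_model_version("user-42", config_store)
```

Because the function keeps nothing in local memory, any replica of the service gives the same routing decision, and setting `candidate_pct` to 0 sends every user back to the control model.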

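4. Organizational Impact and Conway's Law

The architectural decision and the organizational structure shape each other. Conway's Law observes that organizations tend to design systems that mirror their own communication structures, so choosing an architecture also means choosing how teams are expected to work together.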

Development Speed and Agility

Microservices enable decoupled deployments [8]. The Model Serving team can deploy a new version of the recommendation engine multiple times a day without impacting the Data Pipeline team. In a Monolith, every change requires regression testing and a full rebuild of the single application, slowing down innovation cycles [9].

Cost Implications

- Monolith: Lower initial hosting costs (fewer virtual machines, simpler networking). Higher long-term cost due to slower development and the inability to scale resource-intensive components separately (e.g., wasting compute on non-ML components).
- Microservice: Higher initial setup cost and operational overhead (managing service mesh, gateways, and containers). Lower long-term cost due to precise resource allocation and faster development [10].
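The resource allocation argument can be sketched as simple arithmetic. The example below compares hourly node cost when every replica must sit on a GPU node against a split where only inference does. The workload, capacities, and prices are hypothetical, and the calculation deliberately ignores the extra operational overhead of running microservices.

```python
import math


def replicas(load_rps: float, capacity_rps: float) -> int:
    """Smallest replica count whose combined capacity covers the load."""
    return math.ceil(load_rps / capacity_rps)


# Hypothetical workload and prices, for illustration only.
API_RPS = 2000            # all requests hit the business logic
INFERENCE_RPS = 200       # only 10% of requests need a model call
API_CAPACITY = 200        # rps of business logic one replica can handle
INFERENCE_CAPACITY = 100  # rps of inference one GPU replica can handle
GPU_NODE = 3.00           # dollars per hour
CPU_NODE = 0.20           # dollars per hour

# Monolith: each replica bundles everything, so each one sits on a GPU node
# and the replica count is driven by the busiest component.
monolith_nodes = max(replicas(API_RPS, API_CAPACITY),
                     replicas(INFERENCE_RPS, INFERENCE_CAPACITY))
monolith_cost = monolith_nodes * GPU_NODE

# Microservices: business logic scales on CPU nodes, inference on GPU nodes.
micro_cost = (replicas(API_RPS, API_CAPACITY) * CPU_NODE
              + replicas(INFERENCE_RPS, INFERENCE_CAPACITY) * GPU_NODE)
```

Under these assumptions the Monolith needs ten GPU nodes (about $30 per hour), while the split deployment needs ten CPU nodes and two GPU nodes (about $8 per hour). The gap shrinks or reverses when traffic is low or when every request needs inference.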

Vendor Lock-in and Technology Diversity

Microservices facilitate a polyglot environment [11]. If the current model serving framework becomes outdated, only that specific service needs to be rewritten, not the entire application. The Monolith carries a high risk of vendor and framework lock-in, making technology shifts extremely expensive and slow [12].

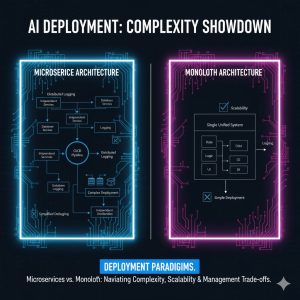

5. Deployment and Observability Challenges

Both architectures have unique complexities in a containerized environment (Kubernetes, Docker).

Deployment

- Monolith: Simple deployment, but scaling is coarse-grained. Vertical scaling requires larger, more expensive machines, and horizontal scaling replicates the entire application.
- Microservice: Complex service mesh management (using tools like Istio or Consul) and increased complexity in managing distributed logging and tracing. This requires specialized DevOps expertise.

Observability

In a Monolithic AI system, logs are centralized, simplifying basic debugging. In a Microservice system, failures are distributed, demanding advanced Distributed Tracing (using tools like Jaeger or Zipkin) to track a single inference request across ten different services [13]. The increased observability complexity is a necessary investment to unlock Microservice scalability.
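The core mechanism behind distributed tracing is propagating one identifier with the request as it crosses services. The sketch below shows only that mechanism. It does not use the Jaeger or Zipkin APIs, the `x-trace-id` header name is a hypothetical placeholder (production systems commonly use the W3C `traceparent` header instead), and a list stands in for the tracing backend.

```python
import uuid

# (trace_id, service) pairs; stand-in for a tracing backend.
trace_log: list[tuple[str, str]] = []


def record_span(headers: dict, service: str) -> None:
    trace_log.append((headers["x-trace-id"], service))


def feature_service(headers: dict) -> list[float]:
    record_span(headers, "feature-store")
    return [10.0, 3.0]


def model_service(headers: dict, features: list[float]) -> float:
    record_span(headers, "model-serving")
    return sum(features)


def gateway(incoming_headers: dict) -> float:
    # Reuse the caller's trace ID if present; otherwise start a new trace.
    trace_id = incoming_headers.get("x-trace-id") or uuid.uuid4().hex
    headers = {"x-trace-id": trace_id}
    record_span(headers, "gateway")
    # The same headers travel with every downstream call.
    return model_service(headers, feature_service(headers))


score = gateway({"x-trace-id": "abc123"})
services_seen = [svc for tid, svc in trace_log if tid == "abc123"]
```

Filtering the log by one trace ID reconstructs the path of a single inference request, which is the view a tracing tool builds across real service boundaries.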

✅ Conclusion: The Optimal Choice for AI in 2026

The decision between Monolith and Microservice for AI deployment is a strategic one, balancing initial speed against future scaling potential.

| Recommended Architecture | When to Choose | Key Reasoning |
| --- | --- | --- |
| Microservice Architecture | High traffic, multiple models, distributed teams, or need for continuous deployment. | Essential for separating high-resource ML services from business logic, enabling independent scaling and technology updates. |
| Monolith Architecture | Early-stage startups, Proofs of Concept (PoCs), small teams, or non-critical internal tools. | Provides the fastest time to market and lowest initial operational complexity. |

For any production AI system aiming for market dominance and continuous evolution in 2026, Microservice Architecture is the mandatory choice. The long-term benefits of independent scaling, fault isolation, and organizational agility overwhelmingly outweigh the initial setup cost and complexity.