1. Defining Hybrid Performance Efficiency

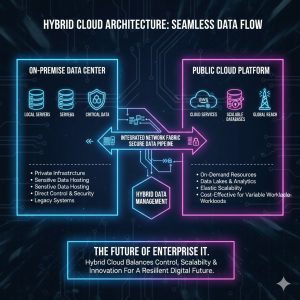

The modern enterprise landscape is defined by data sprawl and dynamic operational needs. While the shift to the public cloud has delivered unprecedented scalability, organizations quickly realized that not every workload belongs in the cloud. Critical applications, legacy systems, and sensitive data demanding low latency or strict regulatory compliance often need to remain on-premise.

This realization birthed the Hybrid Computing model: a seamless, integrated environment where on-premise infrastructure and cloud resources function as a single, coherent IT system.

For leaders managing complex IT portfolios, the central challenge is no longer where the workload resides, but how efficiently the unified system performs. This article introduces the core concepts of performance efficiency in hybrid environments and outlines the key metrics that executives must track.

The Hybrid Integration Imperative

A successful hybrid environment demands unified management and networking. If your on-premise data center and your cloud tenancy act as two separate silos, you have co-location, not hybrid computing. True integration requires:

- Unified Identity and Access Management (IAM): Users should be able to access resources seamlessly, regardless of whether they are on the private network or the public cloud.

- Integrated Networking: Secure, high-speed, low-latency connections (like direct connect services) are crucial for tasks involving data migration or synchronous application components that span both environments.

- A Single Management Plane: Tools that allow centralized monitoring, configuration, and orchestration of containers, virtual machines, and databases across both locations are non-negotiable for efficiency.

Workload Placement and Migration

The core decision for efficiency is workload placement. This is a strategic choice based on five key factors:

- Latency: Applications requiring near real-time response times (e.g., trading platforms, manufacturing control) generally need to stay on-premise, close to the systems they serve.

- Cost: Elastic, bursting, or seasonal workloads are usually cheaper to scale up and down in the public cloud.

- Data Gravity: Large, established datasets should be kept where they are, as moving petabytes of data is costly and time-consuming. New applications are built around the data, not vice versa.

- Security & Compliance: Highly regulated data (e.g., certain financial or health records) may require the control offered by on-premise infrastructure.

- Scalability: Workloads with unpredictable or exponential growth are best suited to the cloud’s elastic, on-demand capacity.
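The five factors above can be read as a rough decision procedure. The following is a minimal sketch only, assuming a hypothetical `Workload` record and an illustrative data-gravity threshold of 100 TB; neither the field names nor the threshold come from the article, and a real placement review would weigh these factors rather than apply them as strict rules.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload (all fields are illustrative)."""
    name: str
    needs_realtime_latency: bool   # Latency factor
    is_bursty_or_seasonal: bool    # Cost factor
    dataset_tb: float              # Data Gravity factor
    data_is_on_prem: bool          # where the existing dataset lives
    is_highly_regulated: bool      # Security & Compliance factor
    growth_is_unpredictable: bool  # Scalability factor


def suggest_placement(w: Workload, gravity_threshold_tb: float = 100.0) -> str:
    """Return 'on-premise', 'cloud', or 'either' for a workload."""
    # Latency and compliance are treated as hard constraints here.
    if w.needs_realtime_latency or w.is_highly_regulated:
        return "on-premise"
    # Data gravity: large datasets stay where they already are.
    if w.dataset_tb >= gravity_threshold_tb:
        return "on-premise" if w.data_is_on_prem else "cloud"
    # Cost and scalability both favor the cloud for elastic demand.
    if w.is_bursty_or_seasonal or w.growth_is_unpredictable:
        return "cloud"
    return "either"
```

For example, a trading platform with real-time requirements would come back as `on-premise`, while a small seasonal campaign site would come back as `cloud`.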

2. Key Metrics for Hybrid Performance Efficiency

To translate the concept of efficiency into a measurable score, IT leaders must focus on three interconnected metrics that define how well the unified system is operating.

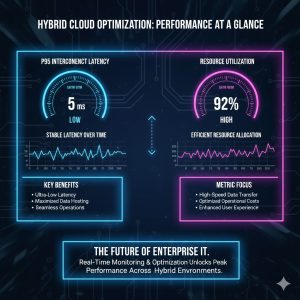

A. Latency & Responsiveness Score

Latency is the measure of time delay experienced when data travels between points. In a hybrid setup, the critical measurement is often the cross-environment latency.

| Metric | Definition | Hybrid Impact |
| --- | --- | --- |
| P95 Interconnect Latency | The 95th percentile of time taken for data to move between the on-premise network and the public cloud virtual private cloud (VPC). | High values indicate a bottleneck; critical for distributed databases and microservices. |
| Application Response Time (ART) | Time from a user request to the first response from the application, particularly when the application’s components are split between environments. | A consistent ART ensures a seamless user experience, masking the underlying hybrid complexity. |

Goal: Keep cross-environment latency low and predictable. For many modern applications, a P95 latency above 50 milliseconds (ms) can severely degrade performance.
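As a rough illustration of how a P95 figure is derived from raw measurements, the sketch below uses the nearest-rank percentile method on a list of latency samples. The function names are hypothetical, and the 50 ms budget is simply the guideline mentioned above; monitoring tools may use a different percentile interpolation.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


P95_BUDGET_MS = 50.0  # guideline from the text, not a universal limit


def exceeds_latency_budget(samples_ms, budget_ms=P95_BUDGET_MS):
    """True when the P95 of the interconnect samples is above the budget."""
    return percentile_nearest_rank(samples_ms, 95) > budget_ms
```

The percentile is used instead of the average because a handful of slow transfers can hide behind a healthy mean while still hurting users.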

B. Resource Utilization and Optimization Score

This score measures how effectively computing resources are being used, identifying both under-provisioning (leading to performance bottlenecks) and over-provisioning (leading to wasted costs).

- Utilization Rate: Percentage of CPU/Memory/Disk being used across the entire hybrid footprint. Low utilization (below 40%) often indicates wasted budget on over-provisioned on-premise hardware or idle cloud instances.

- Right-Sizing Metric: The ratio of provisioned cloud resources to the resources actually needed. Efficient hybrid operations require continuous monitoring and automatically turning off or shrinking idle cloud resources (a core “FinOps” practice).
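Both measures reduce to simple ratios. The sketch below is illustrative only: the function names are hypothetical, the inputs can be any consistent unit (vCPUs, GiB of memory), and the 40% threshold is the one quoted above.

```python
def utilization_rate(used, provisioned):
    """Utilization as a percentage of provisioned capacity."""
    if provisioned <= 0:
        raise ValueError("provisioned capacity must be positive")
    return 100.0 * used / provisioned


def right_sizing_ratio(provisioned, needed):
    """Provisioned resources divided by the resources actually needed.
    1.0 is a perfect fit; 2.0 means twice as much as required."""
    if needed <= 0:
        raise ValueError("needed capacity must be positive")
    return provisioned / needed


def is_underutilized(rate_pct, threshold_pct=40.0):
    """Flag footprints below the utilization threshold from the text."""
    return rate_pct < threshold_pct
```

A cluster using 32 of 128 provisioned vCPUs has a utilization rate of 25%, which this check would flag as under-utilized.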

C. Data Transfer and Egress Cost Metric

One of the largest unexpected costs in hybrid models is moving data. Most cloud providers charge a hefty fee (egress charge) to pull data out of their environment.

- Egress Volume: The total volume of data (in terabytes or petabytes) flowing from the cloud to the on-premise data center per month.

- Egress Cost-to-Revenue Ratio: The total monthly egress cost divided by the total revenue generated by the application. This ratio should stay very small; a rising value signals that data movement is eroding the application’s margin.

- Strategy: Efficient hybrid architectures keep the large, static data sets in one location and only transfer the small, necessary results or updates across the boundary.
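The two egress figures can be computed as follows. This is a sketch under stated assumptions: the per-gigabyte price is an input you would take from your own provider's price list (no real price is implied here), terabytes are converted with decimal units, and the function names are hypothetical.

```python
def egress_cost_usd(volume_tb, price_per_gb_usd):
    """Monthly egress cost from volume (decimal TB) and a per-GB price."""
    return volume_tb * 1000 * price_per_gb_usd


def egress_cost_to_revenue(monthly_egress_cost, monthly_revenue):
    """Share of an application's revenue consumed by egress charges."""
    if monthly_revenue <= 0:
        raise ValueError("monthly revenue must be positive")
    return monthly_egress_cost / monthly_revenue
```

Tracking the ratio rather than the raw cost makes it easier to compare applications of very different sizes.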

3. Orchestration and Fault Tolerance in the Unified System

The true measure of a robust hybrid system is its ability to handle failure and manage dynamic workloads without human intervention. This relies heavily on modern containerization and orchestration tools.

Containerization for Portability

Containers (built with tools like Docker and managed by orchestrators like Kubernetes) are the foundation of hybrid efficiency. They package applications and their dependencies, allowing them to run identically on the cloud, on-premise, or even at the edge.

- Consistency Score: The degree to which application configurations are identical across both environments. High consistency simplifies troubleshooting and ensures performance predictability.
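The article does not define a formula for the Consistency Score. One possible interpretation, shown here purely as an assumption, is the fraction of configuration keys that have identical values in both environments. The function name and the flat key/value representation are hypothetical.

```python
def consistency_score(on_prem_config, cloud_config):
    """Fraction of configuration keys whose values match in both
    environments. Keys present on only one side count as mismatches."""
    all_keys = set(on_prem_config) | set(cloud_config)
    if not all_keys:
        return 1.0  # two empty configurations are trivially identical
    matching = sum(
        1
        for key in all_keys
        if key in on_prem_config
        and key in cloud_config
        and on_prem_config[key] == cloud_config[key]
    )
    return matching / len(all_keys)
```

Under this definition, two deployments that share an image tag and TLS setting but differ in replica count would score two out of three.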

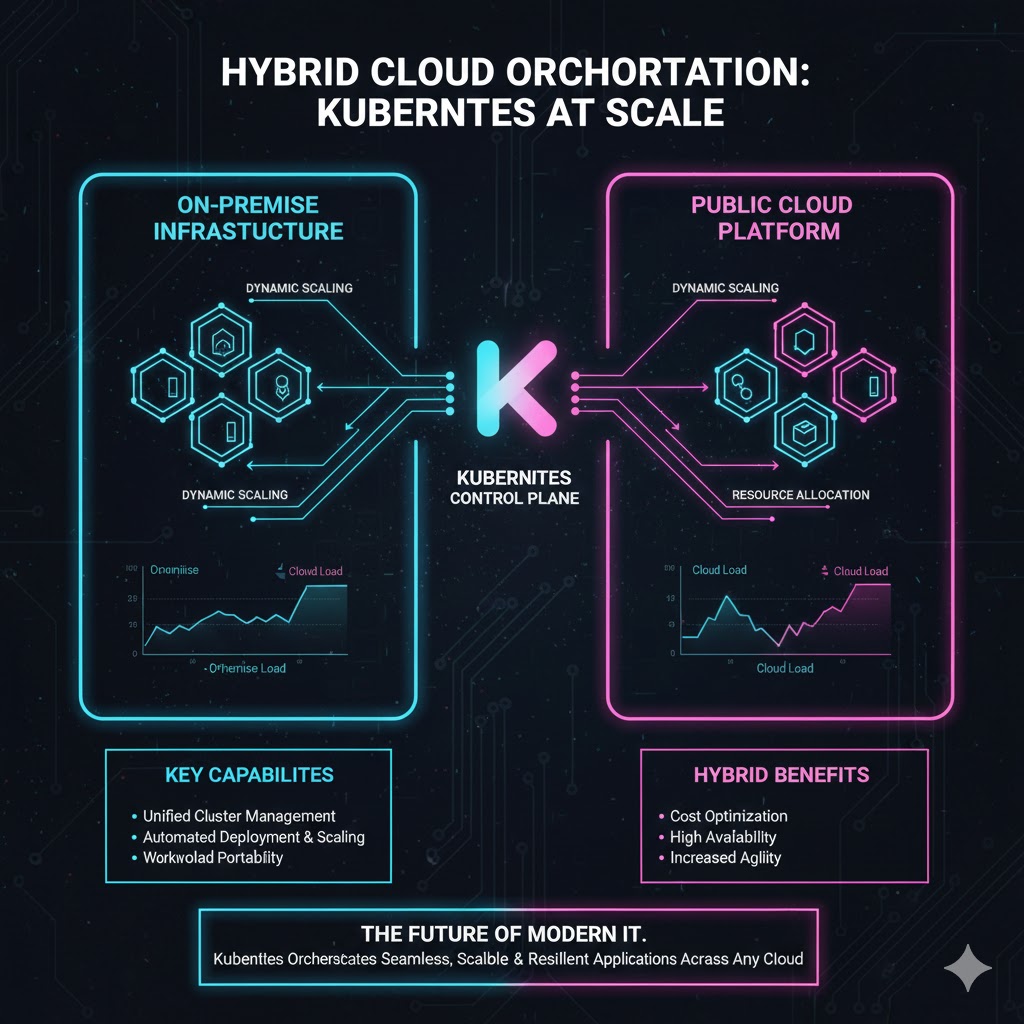

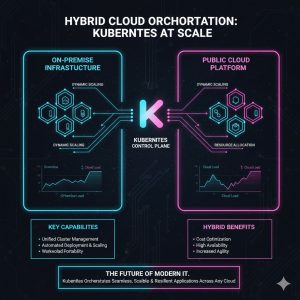

Automated Orchestration (Kubernetes)

Tools like Kubernetes (K8s) are used to schedule, deploy, and scale containers across the entire hybrid boundary.

- Autoscaling Efficiency: The speed and accuracy with which K8s scales resources up (to handle peak load) and down (to reduce cost) in the cloud based on performance metrics monitored on-premise. In an ideal setup, the hybrid system automatically “bursts” excess load to the cloud.

- Failover Speed: The time taken to automatically redeploy a critical application from a failed on-premise server instance to a functional cloud cluster. Fast failover is essential for business continuity.
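To make these two measures concrete, the sketch below reproduces the core scaling rule documented for the Kubernetes Horizontal Pod Autoscaler (desired replicas = ceil(current replicas × current metric / target metric)) and measures failover speed as the gap between two timestamps. It is a simplification: the real autoscaler also applies a tolerance band and stabilization windows, which are omitted, and the function names are hypothetical.

```python
import math
from datetime import datetime


def desired_replicas(current_replicas, current_metric, target_metric):
    """Core Horizontal Pod Autoscaler rule, without tolerance handling."""
    if target_metric <= 0:
        raise ValueError("target metric must be positive")
    return math.ceil(current_replicas * current_metric / target_metric)


def failover_seconds(failure_detected_at, service_restored_at):
    """Failover speed: time between failure detection and the moment
    the application is serving again from the cloud cluster."""
    return (service_restored_at - failure_detected_at).total_seconds()
```

For instance, four replicas running at 90% CPU against a 60% target would be scaled to six replicas.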

Security and Compliance Overhead

While not a direct performance metric, the security enforcement time is crucial. If hybrid security protocols (e.g., encryption, firewalls) are poorly integrated, they can introduce severe latency.

- Security Latency Penalty: The time added to a transaction or data transfer due to necessary security checks (e.g., encryption/decryption overhead). A successful hybrid design integrates security enforcement into the networking fabric to minimize this penalty.
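One simple way to estimate this penalty, offered here as an assumption rather than a prescribed method, is to time the same transaction with and without the security controls in the path and compare the medians. The function name is hypothetical.

```python
from statistics import median


def security_latency_penalty_ms(with_security_ms, baseline_ms):
    """Median added latency attributable to security enforcement.

    with_security_ms: timings with encryption/firewall checks enabled
    baseline_ms:      timings for the same transaction without them
    """
    return median(with_security_ms) - median(baseline_ms)
```

The median is used so that a single slow outlier in either sample does not distort the estimate.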

Conclusion: The Path to Optimization

The goal of hybrid computing is to achieve the flexibility and scale of the cloud while retaining the control and low latency of on-premise infrastructure. This delicate balancing act is measured by the Performance Efficiency Score, which synthesizes the metrics of latency, utilization, and cost management.

For executives, the focus should shift from buying more hardware to optimizing the software layer: specifically, implementing a unified management plane and adopting containerization to ensure portability. By diligently tracking P95 Interconnect Latency and aggressively managing egress cost, organizations can turn a complex hybrid setup into an efficient, cost-effective competitive advantage.

REALUSESCORE Analysis Scores

| Evaluation Metric | Description | Score (Out of 100) |
| --- | --- | --- |
| Strategic Relevance | Addresses the core executive challenge: maximizing value from distributed, high-cost IT assets. | 94 |
| Technical Clarity | Clearly defines the distinction between co-location and true hybrid integration. | 92 |
| Quantifiable Metrics | Focuses on actionable measurements like P95 Latency and Egress Cost-to-Revenue Ratio. | 95 |
| Implementation Guidance | Provides a clear path using containerization (K8s) and workload placement strategies. | 93 |
| Cost Management Focus | Emphasizes the critical (and often overlooked) financial risk of data egress charges. | 96 |
| Overall REALUSESCORE | A strong, executive-level guide for measuring and optimizing hybrid infrastructure efficiency. | 94 |