1. The Challenge of Decentralized AI and the Need for Governance

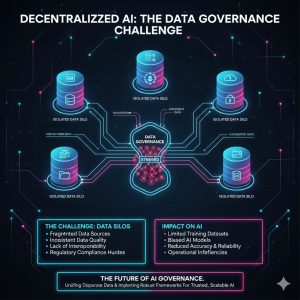

The traditional centralized data architecture, often structured as a monolithic data lake or warehouse, is straining under the demands of modern decentralized AI and Machine Learning (ML) initiatives. As AI becomes embedded across business domains, from marketing and operations to product development, data creation and consumption become distributed.

This distribution leads to critical governance failures:

- Data Silos and Inconsistency: Data remains locked within domain teams, preventing its reuse and leading to duplicated, inconsistent definitions (e.g., different domains defining “Customer” differently).
- Scalability Bottlenecks: Centralized data teams become overwhelmed managing pipelines for dozens of domain-specific AI models, leading to slow feature delivery and brittle infrastructure.
- Quality and Trust Issues: Without clear ownership, data quality suffers, resulting in “garbage in, garbage out” for crucial AI models.

Data Governance is the organizational framework that provides policies and processes for managing data as a critical asset. In the era of decentralized AI, traditional governance must evolve to support autonomy without sacrificing quality or compliance. This evolution leads to the Data Mesh Architecture.

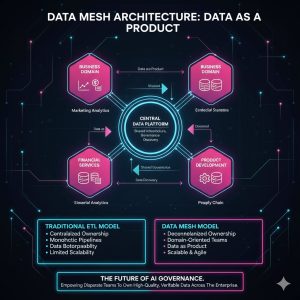

2. Introducing the Data Mesh Architecture

The Data Mesh is an organizational and technical paradigm shift proposed by Zhamak Dehghani that restructures how data is managed, shifting ownership from central IT to the business domains that create and consume the data. It is based on four core principles:

A. Decentralized Domain-Oriented Ownership

Data ownership and accountability are moved from a central data team to the respective business domains (e.g., the Sales domain owns Sales data, the Logistics domain owns Logistics data). These domains are responsible for managing, cleaning, and serving their data.

B. Data as a Product (DaaP)

Domains treat their data, and the pipelines that produce it, as products to be served to internal consumers (including AI models). This principle requires data products to meet a common set of standards:

- Discoverable: Easy for consumers to find and understand.
- Addressable: Accessible via a unique, standardized identifier (e.g., an API endpoint).
- Trustworthy: Guaranteed quality, documented lineage, and service level objectives (SLOs).
- Interoperable: Standardized format and schema, making it usable by different ML tools.
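One way to make these four properties concrete is a small machine-readable descriptor that every data product publishes to the catalog. The sketch below is illustrative only: the `DataProduct` class, its field names, and the `mesh://` address are assumptions made for this example, not part of any Data Mesh standard.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataProduct:
    """Hypothetical catalog descriptor; all field names are illustrative."""
    name: str                                  # discoverable: catalog name
    domain: str                                # owning business domain
    description: str                           # discoverable: what the data means
    endpoint: str                              # addressable: unique, stable identifier
    schema: dict                               # interoperable: column name -> type
    slo: dict = field(default_factory=dict)    # trustworthy: declared objectives
    lineage: tuple = ()                        # trustworthy: upstream sources


def missing_properties(product: DataProduct) -> list:
    """Return the data-product properties the descriptor does not yet satisfy."""
    missing = []
    if not (product.name and product.description):
        missing.append("discoverable")
    if not product.endpoint:
        missing.append("addressable")
    if not (product.slo and product.lineage):
        missing.append("trustworthy")
    if not product.schema:
        missing.append("interoperable")
    return missing


orders = DataProduct(
    name="orders",
    domain="sales",
    description="One row per confirmed order",
    endpoint="mesh://sales/orders/v1",         # assumed addressing scheme
    schema={"order_id": "string", "amount": "float"},
    slo={"availability": 0.99},
    lineage=("sales.orders_raw",),
)
print(missing_properties(orders))
```

A platform could refuse to list a product in the catalog until `missing_properties` returns an empty list, which turns the four properties from guidelines into a publishing gate.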

C. Self-Service Data Platform

The central data team evolves into a platform team, providing a standardized, shared infrastructure (the Data Mesh Platform) that allows domains to easily create, host, and consume data products without needing deep infrastructure expertise. This platform handles cross-cutting concerns like security, monitoring, and cataloging.

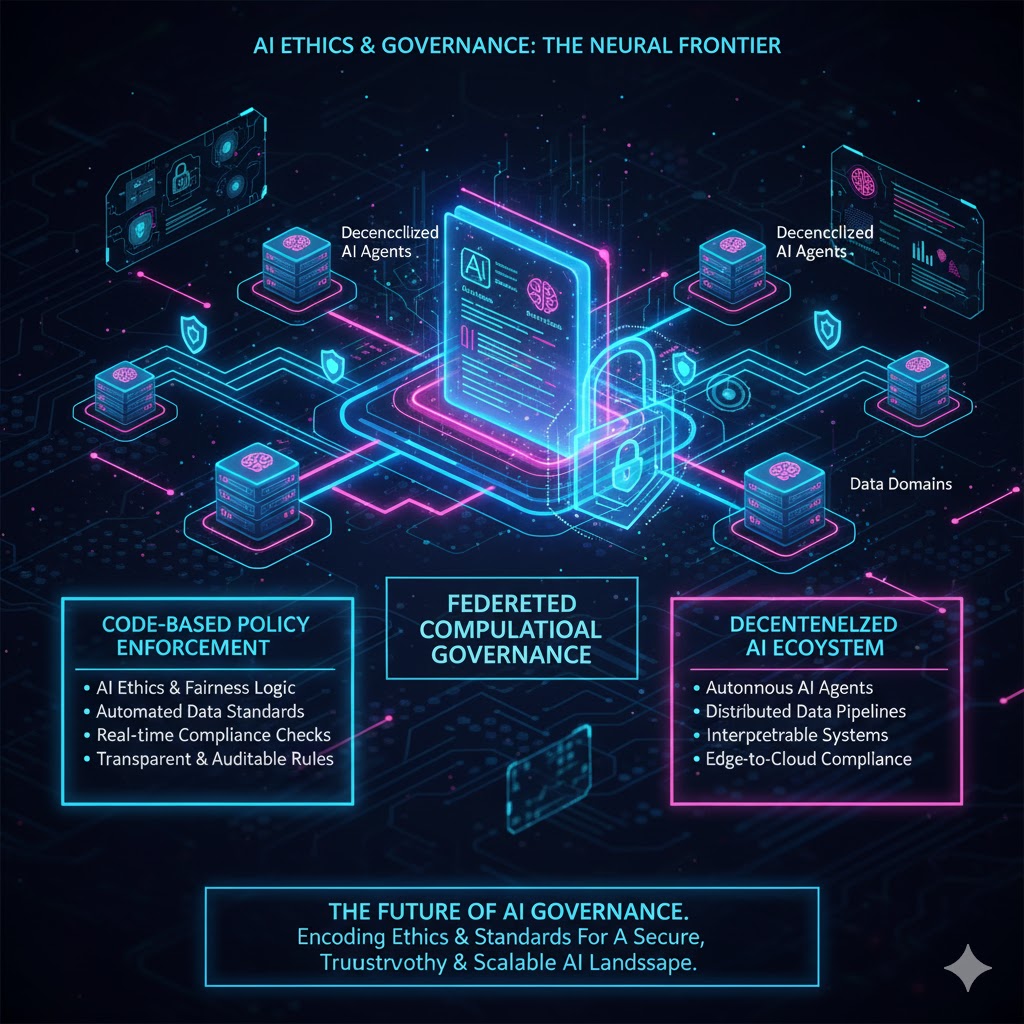

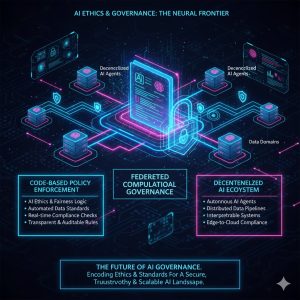

D. Federated Computational Governance

Governance is no longer dictated by a single central team but becomes a collaborative function shared between domain and platform representatives. Policies (e.g., privacy rules, data standards, ethical AI compliance) are agreed once at the global level, enforced programmatically by the self-service platform, and adhered to by individual domain teams.
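As a rough illustration of "defined once, enforced by the platform", global policies can be expressed as plain functions that the platform runs before a domain is allowed to publish. Everything named here (`GLOBAL_POLICIES`, `validate_for_publish`, the metadata keys, and the sensitivity labels) is a hypothetical example rather than an established API.

```python
from typing import Callable, Dict, List, Optional

# A policy inspects a data product's metadata and returns a violation
# message, or None when the product complies.
Policy = Callable[[dict], Optional[str]]


def require_owner(meta: dict) -> Optional[str]:
    return None if meta.get("owner") else "data product has no accountable owner"


def require_sensitivity_label(meta: dict) -> Optional[str]:
    allowed = {"public", "internal", "restricted"}  # assumed label set
    if meta.get("sensitivity") in allowed:
        return None
    return "sensitivity must be one of " + ", ".join(sorted(allowed))


# Agreed once by the federated governance group (hypothetical registry).
GLOBAL_POLICIES: Dict[str, Policy] = {
    "ownership": require_owner,
    "classification": require_sensitivity_label,
}


def validate_for_publish(meta: dict) -> List[str]:
    """Run by the platform before any domain can publish a data product."""
    violations = []
    for name, policy in GLOBAL_POLICIES.items():
        message = policy(meta)
        if message is not None:
            violations.append(f"{name}: {message}")
    return violations


print(validate_for_publish({"owner": "sales-team", "sensitivity": "internal"}))
print(validate_for_publish({"sensitivity": "secret"}))
```

Because the check lives in the platform rather than in each domain's codebase, a new policy takes effect for every domain the moment it is added to the registry.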

3. Data Governance in the Data Mesh Era for AI

Applying Data Mesh principles fundamentally changes how Data Governance supports AI, shifting the focus from control to enabling autonomous data stewardship.

A. Standardized AI Feature Stores

In a Data Mesh, individual domains are responsible for creating and maintaining their AI feature stores (collections of data features used to train ML models). Governance ensures standardization across these stores:

- Feature Definition Consistency: Establishing global standards for how common features (e.g., “customer lifetime value”) are calculated, named, and versioned across different domain feature stores.
- Lineage Tracking: Automatically tracking the origin and transformation path of every feature used in an AI model, critical for auditing and debugging.
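Both requirements can be sketched with a small registry that rejects conflicting definitions of the same feature version and walks declared inputs to recover lineage. This is a minimal in-memory sketch; `FeatureRegistry`, `FeatureDefinition`, and the feature and source names are assumptions for illustration, not the interface of any real feature store.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class FeatureDefinition:
    name: str
    version: int
    owner_domain: str
    inputs: Tuple[str, ...]   # upstream features or raw sources
    logic: str                # human-readable description of the calculation


class FeatureRegistry:
    """Hypothetical shared registry spanning all domain feature stores."""

    def __init__(self) -> None:
        self._features: Dict[Tuple[str, int], FeatureDefinition] = {}

    def register(self, feature: FeatureDefinition) -> None:
        key = (feature.name, feature.version)
        existing = self._features.get(key)
        if existing is not None and existing != feature:
            # Same name and version must always mean the same calculation.
            raise ValueError(f"{feature.name} v{feature.version} is already defined differently")
        self._features[key] = feature

    def latest(self, name: str) -> FeatureDefinition:
        versions = [f for (n, _), f in self._features.items() if n == name]
        if not versions:
            raise KeyError(name)
        return max(versions, key=lambda f: f.version)

    def lineage(self, name: str) -> List[str]:
        """Return every upstream feature or source of `name`, each listed once."""
        seen: List[str] = []
        stack = list(self.latest(name).inputs)
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.append(current)
            if any(n == current for n, _ in self._features):
                stack.extend(self.latest(current).inputs)
        return seen


registry = FeatureRegistry()
registry.register(FeatureDefinition(
    "order_total", 1, "sales", ("sales.orders_raw",), "sum of line items"))
registry.register(FeatureDefinition(
    "customer_lifetime_value", 1, "marketing",
    ("order_total", "crm.customers_raw"), "sum of order_total per customer"))
print(sorted(registry.lineage("customer_lifetime_value")))
```

A changed calculation has to be registered under a new version number, so models trained against version 1 keep a stable, auditable definition.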

B. Privacy and Ethical AI Compliance

Decentralized data poses heightened privacy risks, making granular governance essential.

- Computational Policies: Governance policies are written as code (computational governance) and deployed onto the self-service platform. These policies automatically enforce data masking, anonymization, and access controls based on the data product’s sensitivity level, regardless of which domain owns it.
- Bias Mitigation: Governance mandates the inclusion of metadata regarding data source, collection method, and known demographic imbalances within the data products. This transparency allows downstream AI teams to explicitly assess and mitigate potential model bias. For a broader look at algorithmic fairness, see our analysis: The Impact of Web3 on Content Ownership and Creator Economy: A 2026 Forecast.
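The masking part of a computational policy might look like the sketch below, where each column carries a tag and the platform applies the matching action before data leaves the product. The tag names, `MASKING_RULES`, and `apply_policy` are assumptions for this example. Note that the unsalted hash shown here is pseudonymization at best; real anonymization needs stronger techniques.

```python
import hashlib


def _pseudonymize(value) -> str:
    # Deterministic token so joins still work; NOT true anonymization.
    return hashlib.sha256(str(value).encode("utf-8")).hexdigest()[:12]


# Hypothetical mapping from a column's tag to the action the platform applies.
MASKING_RULES = {
    "public": lambda value: value,
    "pseudonymize": _pseudonymize,
    "redact": lambda value: "***",
}


def apply_policy(record: dict, column_tags: dict, default_action: str = "redact") -> dict:
    """Mask one record; untagged columns fall back to the most restrictive action."""
    masked = {}
    for column, value in record.items():
        action = column_tags.get(column, default_action)
        masked[column] = MASKING_RULES[action](value)
    return masked


record = {"customer_id": 42, "email": "a@example.com", "country": "DE", "notes": "called twice"}
tags = {"customer_id": "pseudonymize", "email": "redact", "country": "public"}
print(apply_policy(record, tags))
```

Defaulting untagged columns to `redact` means a domain that forgets to classify a new column leaks nothing, which is the safer failure mode for decentralized ownership.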

C. Establishing Data Product Service Level Objectives (SLOs)

Governance defines the requirements for domains to declare their data products as “trustworthy.” This includes setting clear SLOs for:

- Availability: The percentage of time the data product API is accessible.
- Latency: The maximum time required to query the data product.
- Quality: Pre-defined thresholds for data completeness, accuracy, and validity, with automated alerts when thresholds are breached.

By setting these standards, AI teams can confidently rely on cross-domain data products, accelerating development cycles.
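The three SLO dimensions above can be checked automatically against observed measurements. The following sketch assumes availability is measured as the share of successful health checks and quality as completeness of required values; the `Slo` and `Observation` classes and the threshold values are illustrative, not prescribed by the article.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Slo:
    min_availability: float    # fraction of successful health checks, 0..1
    max_latency_ms: float      # upper bound on observed query latency
    min_completeness: float    # fraction of required values that are non-null, 0..1


@dataclass(frozen=True)
class Observation:
    checks_total: int
    checks_ok: int
    worst_latency_ms: float
    required_values: int
    non_null_values: int


def breaches(slo: Slo, obs: Observation) -> List[str]:
    """Return one alert message per breached objective (empty list if healthy)."""
    alerts = []
    availability = obs.checks_ok / obs.checks_total if obs.checks_total else 0.0
    completeness = obs.non_null_values / obs.required_values if obs.required_values else 0.0
    if availability < slo.min_availability:
        alerts.append(f"availability {availability:.2%} is below {slo.min_availability:.2%}")
    if obs.worst_latency_ms > slo.max_latency_ms:
        alerts.append(f"latency {obs.worst_latency_ms:.0f} ms exceeds {slo.max_latency_ms:.0f} ms")
    if completeness < slo.min_completeness:
        alerts.append(f"completeness {completeness:.2%} is below {slo.min_completeness:.2%}")
    return alerts


slo = Slo(min_availability=0.99, max_latency_ms=500, min_completeness=0.98)
today = Observation(checks_total=1000, checks_ok=995, worst_latency_ms=420.0,
                    required_values=2000, non_null_values=1900)
for alert in breaches(slo, today):
    print(alert)
```

In this example only the completeness objective is breached, so a consuming AI team would be warned about missing values while availability and latency remain within bounds.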

4. Implementation Challenges and Strategic Considerations

While Data Mesh offers substantial benefits for scaling AI, implementing it is primarily an organizational hurdle rather than a purely technical one.

- Cultural Shift: The greatest challenge is shifting accountability and ownership from central teams to domain teams, requiring training and a change in engineering mindset toward product thinking.
- Initial Overhead: Defining standards, building the self-service platform, and converting existing monolithic data structures into standardized data products require significant upfront investment.
- Technological Interoperability: Ensuring that data products from different domains (which may use different underlying databases) can be seamlessly queried and integrated requires robust, standardized interfaces defined and enforced by the platform team.
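To illustrate the interoperability point, the sketch below defines one small read contract that two products with different storage back ends both satisfy, so a consumer can join them without knowing how either is stored. The `DataProductPort` protocol and both example classes are hypothetical and assume Python 3.8 or later.

```python
import csv
import io
from typing import Iterator, List, Protocol


class DataProductPort(Protocol):
    """Hypothetical read contract the platform team could require of every product."""

    def schema(self) -> dict: ...
    def read(self) -> Iterator[dict]: ...


class InMemoryOrders:
    """Sales-domain product backed by Python objects."""

    def __init__(self, rows: List[dict]) -> None:
        self._rows = list(rows)

    def schema(self) -> dict:
        return {"customer_id": "string", "amount": "float"}

    def read(self) -> Iterator[dict]:
        return iter(self._rows)


class CsvCustomers:
    """CRM-domain product backed by CSV text."""

    def __init__(self, text: str) -> None:
        self._text = text

    def schema(self) -> dict:
        return {"customer_id": "string", "segment": "string"}

    def read(self) -> Iterator[dict]:
        return iter(csv.DictReader(io.StringIO(self._text)))


def join_on(left: DataProductPort, right: DataProductPort, key: str) -> List[dict]:
    """Inner-join two products using only the shared contract."""
    index = {row[key]: row for row in right.read()}
    return [{**row, **index[row[key]]} for row in left.read() if row[key] in index]


orders = InMemoryOrders([{"customer_id": "c1", "amount": 10.0},
                         {"customer_id": "c2", "amount": 5.0}])
customers = CsvCustomers("customer_id,segment\nc1,retail\n")
print(join_on(orders, customers, "customer_id"))
```

The consumer code in `join_on` depends only on the contract, so a domain can migrate its storage without breaking downstream AI pipelines.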

REALUSESCORE Analysis Scores

Analysis of Data Mesh’s efficacy and complexity in managing Decentralized AI Data:

| Evaluation Metric | Scalability for AI/ML | Data Quality & Trustworthiness | Organizational Complexity | Regulatory Compliance Management |
| --- | --- | --- | --- | --- |
| Architectural Impact | 9.7 | 9.5 | 6.0 | 9.3 |
| Implementation Challenge | 8.0 | 7.5 | 9.5 | 8.8 |
| REALUSESCORE FINAL SCORE | 9.5 | 9.0 | 6.5 | 9.1 |