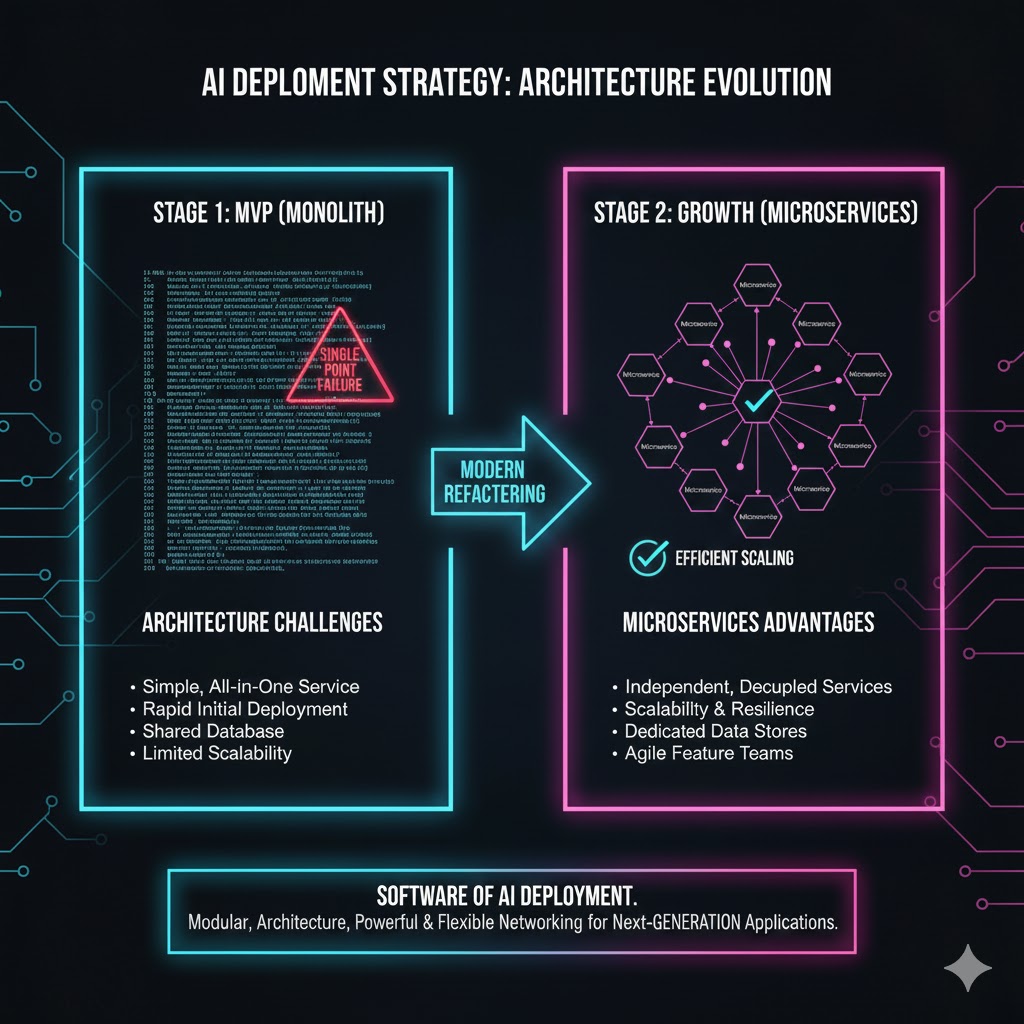

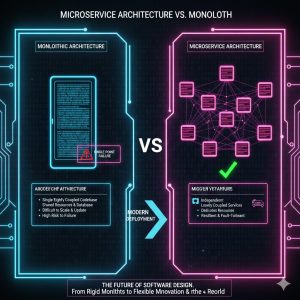

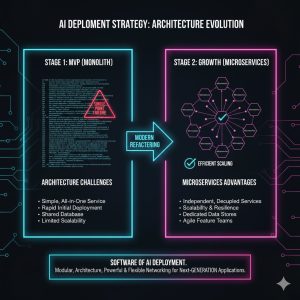

The Fundamental Trade-Off: Simplicity vs. Scalability in AI

For technology leaders and developers building cutting-edge applications, the choice between a Monolith and Microservices is one of the most consequential architectural decisions, especially when deploying complex AI systems. The choice hinges on a fundamental trade-off: Monoliths optimize for speed, simplicity, and low initial cost, while Microservices optimize for independent scaling, agility, and technological flexibility. For an AI application, which often combines resource-intensive models with standard business logic, this decision shapes long-term costs, team efficiency, and the ability to scale core features independently.

1. Monolithic Architecture: Speed, Simplicity, and Initial Clarity

A Monolith is built as a single, unified codebase where all components (UI, business logic, data access, and the AI model) are tightly integrated and deployed together as one unit.

Pros for AI Deployment (The Case for Monolith)

- Fast Development and MVP (Minimum Viable Product): For early-stage startups or proof-of-concept AI features, a monolith is the fastest path to deployment [1.2, 3.1]. Engineers focus on product features, not network coordination.
- Lower Initial Cost: Monoliths require less specialized DevOps expertise, simpler infrastructure (fewer servers, one database), and lower initial operational overhead [3.1, 4.2].
- Easier Debugging and Testing: With all code and logs in one place, tracing a request and finding a bug, even one that crosses the AI model boundary, is straightforward and needs no distributed tracing [1.1, 3.4].
- Low Latency (In-Process Calls): The entire application runs within a single process. Communication between the user-facing API and the AI inference engine is a function call rather than a network hop, which avoids the network latency that can degrade real-time AI performance (e.g., live speech processing) [1.4, 4.2].

Cons for AI Deployment (The Monolith Bottleneck)

- Inefficient Scaling: This is the biggest pain point for AI. If your AI model (e.g., a heavy-duty recommendation engine) requires high-end GPUs or massive compute power, you must scale the entire monolithic application, including the parts that only need simple CPU power, just to support that single AI component [3.1]. This is wasteful and costly [1.1].
- Technology Lock-in: The entire application is typically tied to a single technology stack (e.g., Java or Python). If a new, high-performance AI framework or database is better suited to the model, adopting it is difficult or impossible [1.5, 4.2].
- Slower Deployment and Single Point of Failure: Even a small update to the model’s pre-processing logic requires rebuilding and redeploying the entire application, increasing risk and deployment time [1.1]. A bug in any component can bring down the whole system [3.4].
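The scaling inefficiency described above can be made concrete with a little arithmetic. The sketch below is only an illustration: the hourly prices and replica counts are made-up assumptions, not measured figures, and `NodePrice`, `monolith_cost`, and `split_cost` are hypothetical names. It compares scaling a whole monolith on GPU nodes with scaling only an isolated inference service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodePrice:
    """Hourly price per node. The values used below are illustrative assumptions."""
    cpu: float
    gpu: float


def monolith_cost(replicas: int, price: NodePrice) -> float:
    # Every replica bundles the web tier and the model, so each one needs a GPU node.
    return replicas * price.gpu


def split_cost(web_replicas: int, model_replicas: int, price: NodePrice) -> float:
    # The web tier runs on CPU nodes; only the model replicas need GPU nodes.
    return web_replicas * price.cpu + model_replicas * price.gpu


price = NodePrice(cpu=0.10, gpu=3.00)  # hypothetical $/hour
# Assumption: traffic needs 10 web replicas, but 2 model replicas are enough.
mono = monolith_cost(replicas=10, price=price)
split = split_cost(web_replicas=10, model_replicas=2, price=price)
print(f"monolith: ${mono:.2f}/h, split: ${split:.2f}/h")
```

The gap grows with the ratio of web replicas to model replicas, so the argument is strongest when ordinary traffic and inference load scale very differently.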

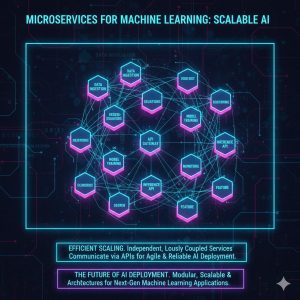

2. Microservice Architecture: Independent Scaling and Flexibility

Microservices break the application into small, independent services, each with its own codebase, deployment pipeline, and often its own data store. Each service handles one clear function (e.g., User Authentication, Recommendation Engine, Payment Gateway).

Pros for AI Deployment (The Case for Microservices)

- Independent, Cost-Effective Scaling: You can isolate the AI service (e.g., a Large Language Model) and assign it dedicated, high-cost resources (like specialized GPUs) while keeping the low-cost, high-traffic frontend services on standard CPUs [1.1, 2.2]. This is one of the most effective ways to control cloud costs and improve resource efficiency.
- Fault Isolation and Resilience: If the AI model crashes due to a memory leak or a bad input, the rest of the application (e.g., browsing the product catalog) remains operational. This resilience is vital for high-availability systems [1.3, 2.2].
- Technology Heterogeneity: Different services can use the best tool for the job. The AI team can use Python/PyTorch for the model inference service, while the web team uses Go or Node.js for the API Gateway, which helps both productivity and performance [1.3, 4.3].
- Faster, Independent Deployment: The AI model can be updated and redeployed many times a day (Continuous Delivery) without affecting other services, enabling faster iteration on model performance [2.1, 2.6].
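Fault isolation only pays off if callers of the AI service degrade gracefully when it fails. The following is a minimal sketch under stated assumptions: `fetch` stands in for a network call to a separate recommendation service, and `POPULAR_ITEMS`, `recommend`, `healthy_service`, and `broken_service` are hypothetical names invented for this example.

```python
from typing import Callable, List

# Hypothetical static fallback shown when the AI service is unavailable.
POPULAR_ITEMS: List[str] = ["item-1", "item-2", "item-3"]


def recommend(user_id: str, fetch: Callable[[str], List[str]]) -> List[str]:
    """Return personalised recommendations, or a static fallback on failure.

    `fetch` represents a call to a separate recommendation service,
    for example an HTTP request made with a timeout.
    """
    try:
        return fetch(user_id)
    except OSError:
        # TimeoutError and ConnectionError are both subclasses of OSError.
        # The AI service is down or slow; the rest of the page still renders.
        return POPULAR_ITEMS


def healthy_service(user_id: str) -> List[str]:
    # Stand-in for a service that answers normally.
    return [f"picked-for-{user_id}"]


def broken_service(user_id: str) -> List[str]:
    # Stand-in for a service that has crashed or is overloaded.
    raise TimeoutError("recommendation service did not answer in time")


print(recommend("u42", healthy_service))
print(recommend("u42", broken_service))
```

In a monolith the equivalent failure (for instance an out-of-memory crash during inference) would take the whole process down, so there would be no caller left to apply the fallback.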

Cons for AI Deployment (The Complexity Burden)

- Increased Complexity and Operational Overhead: Managing dozens of services, separate databases, network communication (API calls, latency), and distributed logging requires deep DevOps maturity, container orchestration (Kubernetes), and continuous monitoring [1.3, 3.1].
- Network Latency: Communication between services involves network calls, introducing latency and the risk of partial failures. This can be problematic for synchronous, high-throughput AI services [1.3, 4.2].
- Data Consistency: Maintaining data consistency across multiple service-specific databases is far more complex than in a single monolithic database, where ACID transactions are available [2.1].

3. When to Choose Which for Your AI Deployment

The decision is not absolute; it is a point on the growth curve of your application.

| Architecture | Choose When… | Core Reason for AI |
| --- | --- | --- |
| Monolith | Startups/MVPs: Small team (under 10), tight budget, validating the product, core AI feature is simple. | Speed and Clarity. The cost of managing microservices outweighs the gains; focus on Time to Market and core logic [3.1, 4.1]. |
| Microservices | Rapid Growth: Teams exceed 10-15 developers, different scaling needs across domains, and high-risk components. | Independent Scaling. The AI model requires significant, expensive resources (GPUs) and must be updated frequently, or a failure in the model must not be allowed to take down the core business logic [3.1, 4.1]. |
| Modular Monolith | Bridge Strategy: The application is growing, but not ready for the full operational complexity of microservices. | Preparation. Structure the code into well-defined modules (e.g., a separate module for the AI) that can be easily “cut out” and deployed as a microservice when necessary [3.2, 4.1]. |
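One way to keep the AI module easy to “cut out” is to have the business logic depend on an interface rather than on the model itself. The sketch below assumes a hypothetical `Recommender` interface and a hypothetical `/recommendations/{user_id}` endpoint returning a JSON list; none of these names come from a specific framework.

```python
import json
import urllib.request
from typing import List, Protocol


class Recommender(Protocol):
    """The seam between business logic and the AI module."""

    def recommend(self, user_id: str) -> List[str]: ...


class InProcessRecommender:
    """Runs the model inside the monolith's own process."""

    def recommend(self, user_id: str) -> List[str]:
        # Placeholder for real model inference.
        return [f"local-pick-for-{user_id}"]


class RemoteRecommender:
    """Calls the same logic once it has been extracted into its own service."""

    def __init__(self, base_url: str, timeout_s: float = 2.0) -> None:
        self.base_url = base_url.rstrip("/")
        self.timeout_s = timeout_s

    def recommend(self, user_id: str) -> List[str]:
        # Hypothetical endpoint that returns a JSON list of item ids.
        url = f"{self.base_url}/recommendations/{user_id}"
        with urllib.request.urlopen(url, timeout=self.timeout_s) as resp:
            return json.loads(resp.read().decode("utf-8"))


def render_home(user_id: str, recommender: Recommender) -> str:
    # Business logic depends only on the interface, not on where the model runs.
    return ", ".join(recommender.recommend(user_id))


print(render_home("u42", InProcessRecommender()))
```

With this shape, migrating the AI module means swapping `InProcessRecommender` for `RemoteRecommender` at the point where the application is wired together, while `render_home` stays unchanged.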

Ultimately, many successful large-scale platforms (such as Netflix or Uber) started with a monolith to establish product-market fit and gradually migrated to microservices once the pain of scaling the monolith outweighed the cost of managing the new complexity [3.3].

REALUSESCORE.COM Analysis Scores

| Evaluation Metric | Score (Out of 10.0) | Note/Rationale |
| --- | --- | --- |
| Monolith: Time-to-Market Score | 9.8 | Unmatched speed for initial development and validating the core AI idea (MVP) [3.1]. |
| Microservices: Scaling Efficiency Score | 9.7 | Superior ability to independently scale and allocate specialized resources (GPUs) only where needed [1.1]. |
| Monolith: Long-Term Maintenance Risk | 6.5 | Lower score due to high risk of technical debt and complex, high-impact deployments [1.1]. |
| Microservices: Operational Complexity Score | 7.0 | High score reflects the significant need for advanced DevOps, monitoring, and expertise [1.3]. |
| AI-Model Flexibility (Tech Stack) | 9.6 | Microservices enable using Python/PyTorch for the model and different languages for the rest of the application [1.3]. |
| REALUSESCORE.COM FINAL SCORE | 9.2 / 10 | The optimal strategy is a Monolith start with a clear, staged migration path to Microservices, driven by the need for independent AI scaling. |